When Apple introduced the iPhone in 2007, it didn't just launch a new phone. It changed the shape of the object, the way we interact with technology and the architecture of interfaces, and from that moment on the entire sector began to adapt.

When a revolution really works, however, one specific thing often happens: after the initial break comes a long phase of stabilization. And that's exactly what happened to smartphones as well.

Today, smartphones are very powerful, refined, efficient products, perfectly embedded in increasingly integrated ecosystems. Yet if we look at them with a designer's eye, we realize that the archetypal form has crystallized into an almost all-screen front, a thin body, a rectangle of glass and metal. Each new model brings only small variations: a new color, a different camera module, an extra button, a slightly thinner bezel.

Innovation has not stopped; it has moved. It has been redistributed across performance, chips, photographic processing, batteries, materials, continuity between devices, services, efficiency. These are all real innovations, but (almost) never capable of profoundly changing the usage paradigm.

In other words, smartphones continue to improve, but they rarely force us to learn a new way of using them.

The same goes for operating systems. The first iPhone OS was revolutionary because it defined a model that is still current: home screen, icons, apps, notifications, settings, store, gestures, containers. Over the years, interfaces have become faster, cleaner, denser, more animated and more customizable, but the basic architecture has remained surprisingly stable.

In fact, the contemporary mobile operating system works mainly as an environment that organizes access to separate software, installed from a store, with fairly consistent logic from platform to platform.

The apps do the specific job, while the OS mediates, orders, notifies, authorizes and connects. It is such an effective model that it has spread beyond phones and shaped other worlds. Just look at automotive interfaces to see it: modular screens, icons, widgets, containers, gestures, profiles and synchronized services are increasingly reminiscent of the behavior of a smartphone.

The level of finish has changed a lot; the paradigm, much less.

In recent years, the major players seem to have shifted their playing field:

All of this is very interesting, not least because it captures the contemporary tension in digital design: in a market where the basic architecture is now consolidated, visual language is once again a very strong competitive element.

But is this enough to talk about real innovation in use? No, it's often not enough.

A more expressive UI isn't automatically a more transformative UI. It may be more pleasant, more recognizable, more personal and more engaging, but it doesn't necessarily change the relationship between user and system.

By now, every reflection on the future of technology inevitably involves artificial intelligence. The role of AI seemed clear years ago (even before the hype of the last two years): if the phone is always with us, knows the context and collects continuous input, then the intelligent assistant should have become the real center of the mobile experience.

Siri, introduced by Apple in 2011 with the iPhone 4S, was the first mainstream manifestation of this promise. Talking to the phone to trigger actions, search for information, set reminders, open services: it seemed like the beginning of a new interface, but then that value proposition stalled halfway. Siri is still useful, but rarely decisive, and it hasn't improved much over the years.

With generative AI, it finally seemed like the time had come to change pace: Apple Intelligence on the one hand, Gemini on the other, and in general a new generation of increasingly conversational and contextual assistants.

Apple presented Apple Intelligence in 2024, promising significant improvements to Siri, including more personal functionality, a better understanding of context, and enhanced alerts. To date, however, many of these announced features have not yet been implemented or have not proved to be as impactful as Apple, and all of us, expected.

AI has arrived on smartphones, but in most cases it reinforces the existing paradigm instead of replacing it. It summarizes notifications, rewrites texts, generates images, organizes information, helps with searches, interprets requests and activates functions, but it almost always continues to operate within a structure designed before AI itself.

For this reason — at least for now — the intelligent assistant is more of an additional layer than a new interaction architecture.

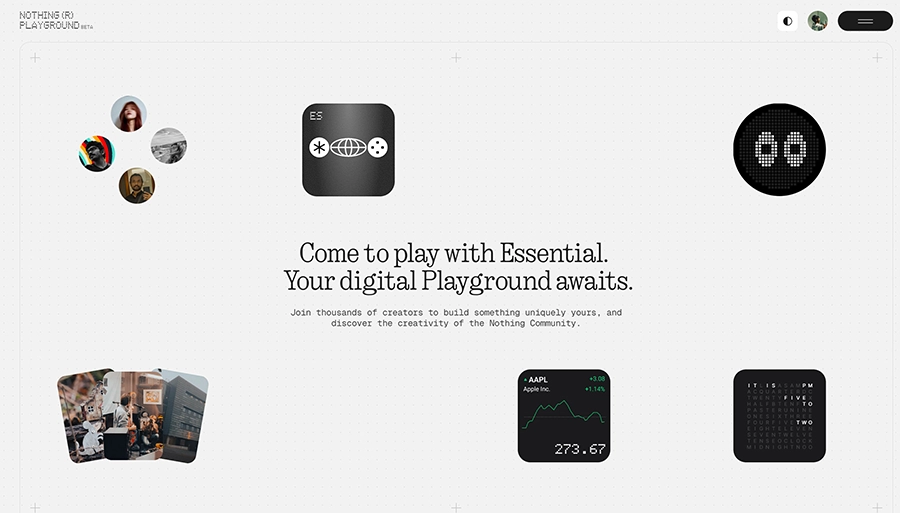

With Nothing Playground (launched in September 2025), Nothing has started to show a different model: through a dedicated platform, users can generate mini-apps or widgets using simple text prompts. The widgets can then be used directly on the user's smartphone and shared with the Nothing community.

The platform is functional, even if it is still in beta, and the widgets have several limitations:

These are still weak signals, not certainties, but precisely for this reason they are worth observing carefully: the point is not whether Nothing has already built the future of mobile, but that it is making visible a design question that until now had remained mainly theoretical.

This question came up a few weeks ago, during a conversation with Chiara Pizzo, a design student at SID. Describing her thesis idea, inspired precisely by Nothing's personalized widgets, Chiara wondered whether it was possible to generate widgets that optimize the interface for usability by older people or people with disabilities.

During the conversation, we asked ourselves:

If today we can generate customized native widgets, why couldn't the same approach be applied to the entire operating system tomorrow?

For the last fifteen years, we have lived in a simple paradigm: millions of people use the exact same app, designed once and distributed en masse. At most, you customize some details, change preferences, activate functions and move widgets. But the basic software remains the same for everyone.

Generative AI and vibe coding could start to crack this logic.

A student could generate a study tool designed around their own method.

A freelancer, a micro-tool for quotes, hours and deadlines.

An athlete, a tracker much more in line with their routine.

An older person, a more readable, essential, simplified interface.

A person with disabilities, a configuration better suited to their abilities and context.

The topic stops being a tech demo and immediately becomes human. Because if today we can generate widgets, components or small flows, couldn't the same happen in the future to ever larger portions of the interface?

For those who design, this trajectory does not come out of nowhere.

In 2011, I (Chris, Ed.) wrote a thesis on dynamic visual identities, in which I highlighted an important distinction: designing not a closed form, but a system. Not fixing a single output, but defining a grammar, a generative process, a set of rules capable of producing consistent variations.

Today, carried over into UX/UI, that same logic becomes even more interesting: a dynamic interface is not simply a screen that changes color, it's not a skin, it's not a dark mode and it's not even just aesthetic customization. It is a system that adapts to the context, content, capabilities, objective, behavior and moment of use.

In part, this already happens: interfaces react to the device, the theme, the language, the history, the preferences, the data. Systems like Material 3 make chromatic and behavioral customization an explicit part of the framework.
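To make the idea of a "grammar" more tangible, here is a deliberately tiny sketch in Python. Everything in it is hypothetical: the `Context` and `Layout` fields, the rules and the font limits are illustrative assumptions, not taken from Material 3, Nothing or any real framework. It only shows the principle: the designer defines rules and limits, and the layout is derived from the context of use rather than drawn once.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Context:
    """Hypothetical signals describing the person and the moment of use."""
    low_vision: bool = False
    one_handed: bool = False
    night: bool = False


@dataclass(frozen=True)
class Layout:
    """Hypothetical design tokens produced by the grammar."""
    font_pt: int = 16
    columns: int = 4
    theme: str = "light"
    controls: str = "top"


# Limits that keep every variation recognisably part of the same system
# (illustrative values, not a recommendation).
MIN_FONT_PT, MAX_FONT_PT = 14, 28


def generate_layout(ctx: Context, base: Layout = Layout()) -> Layout:
    """Apply each rule in turn, then clamp the result to the system limits."""
    layout = base
    if ctx.low_vision:
        # Larger type and fewer columns for readability.
        layout = replace(layout, font_pt=layout.font_pt + 8, columns=2)
    if ctx.one_handed:
        # Move primary controls within thumb reach.
        layout = replace(layout, controls="bottom")
    if ctx.night:
        layout = replace(layout, theme="dark")
    clamped = max(MIN_FONT_PT, min(MAX_FONT_PT, layout.font_pt))
    return replace(layout, font_pt=clamped)
```

Calling `generate_layout(Context(low_vision=True, night=True))` yields a dark layout with larger type and two columns, while `generate_layout(Context())` returns the unchanged base. The design work sits in the rules and the limits, not in any single screen.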

Another practical example is Netflix.

Even if we often don't notice it, Netflix already personalizes an important part of the experience based on user profiling, viewing history and behavioral signals. This means that two people, at the same moment, can see a different home page, a different order of content and even different cover images for the exact same movie or series. Netflix has openly explained this on its Tech Blog: for many titles there are multiple promotional artworks, and the system chooses which one to show to each user based on what is most likely to attract their attention.

Here is the real paradigm shift: no longer just adaptability decided by the company, but adaptability that can be generated even starting from the explicit need of the individual user.

If this scenario really develops, the very role of the operating system changes: in current systems, the OS manages resources, permissions, notifications, services, windows, inputs, apps; in a more advanced scenario it could also become a tool generation platform.

No longer just an environment that houses software, but also the infrastructure that helps produce it.

At that point, AI wouldn't simply be an assistant that opens apps for us, but rather a mediator between need and tool: the user expresses a need, the system interprets the context and generates a temporary, targeted, personal solution. Not perfect or definitive, but sufficient, useful, situated.

And this is where the designer's profession also changes.

Because if the interface is no longer a fixed output, then designing doesn't just mean drawing screens. It means defining rules, behaviors, priorities, limits, security, accessibility, consistency. In other words: no longer just composing surfaces, but designing grammars.

It will probably not be just another slightly thinner black rectangle, nor a camera moved by a few millimeters or a new glass effect described as a definitive revolution in interaction.

The future of smartphones (perhaps) will be less spectacular to see, but much more radical to design.

It could be about more fluid, adaptive, personal, and context-aware operating systems. Systems capable not only of hosting tools, but also of generating them starting from people's needs. This does not mean that the apps will disappear or that the current model is finished, nor that Nothing has already shown the definitive path.

But one thing seems to be emerging: after years of stability, a small crack in the smartphone paradigm is starting to show.

While we were reviewing this article before publication, during The Android Show: I/O Edition 2026, Google introduced Create My Widget, a new feature that lets users generate personalized widgets using natural language and that the company itself has described as “the first step towards a generative UI”. Google places it within the new Gemini Intelligence ecosystem, imagining it not as a simple aesthetic tool, but as a way to create more personal, dynamic and useful tools starting directly from the user's needs.

Compared to Nothing's widgets, Android's approach seems to start from a deeper level of integration with the operating system.

Precisely because it relies on the Gemini infrastructure and the services of the Android ecosystem, Create My Widget seems to open up larger scenarios: not only small personalized elements to show on the home screen, but widgets capable of interacting with data, context and system functions in a more articulated way. It's still too early to talk about a real paradigm shift, but the signal is clear: the idea of a generative UI is no longer a theoretical suggestion or an isolated experiment; it is starting to become a concrete direction even for large operating systems.